October 2005

Editor’s Note: This study uses a new kind of measuring tool, the Spatial Probability Measure (SPM), to detect gender bias and compare its gender-related component to multiple-choice and other forms of testing.

Gender as a Variable in Graphical Assessment

David Richard Moore

Abstract

This paper presents the Spatial Probability Measure (SPM). The SPM is an adapted assessment instrument that attempts to ascertain a learner’s strength of response, or response certitude, relative to the other options. The instrument is unique in that it has many of the characteristics of a multiple-choice assessment instrument but differs by collecting responses on a continuum instead of the discrete options provided by multiple-choice. In particular, the issue of gender is examined. The question the study seeks to address is: does gender influence the degree to which response certitude is expressed through the SPM instrument? Results indicate that gender is not a significant factor in responding to the SPM. The SPM may be of interest to creators of computer-based instructional software because it retains much of the efficiency of the multiple-choice technique while potentially providing designers with additional information that can be used to adapt instruction.

Keywords: instruction, technology, computer-based instruction, assessment, gender, user-interface, spatial probability measure, multimedia

Introducing the Spatial Probability Measure

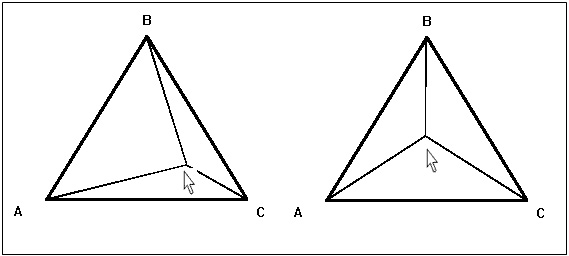

The Spatial Probability Measure (SPM) is an assessment instrument adapted by Moore (2005). The instrument, displayed in Figure 1, allows a learner to express varying degrees of certitude in a response by selecting a position within a triangle whose corners represent the answer options.

Figure 1. Spatial Probability Measure (Moore, 2005)

The cursor in Figure 1a suggests that the learner favors answer C relative to the others, while the cursor in Figure 1b suggests that the learner is neutral, equally uncertain among the options. In contrast to multiple-choice questions, the SPM allows learners to express their opinion on a continuum, potentially providing a more accurate assessment of their knowledge (Bruno, 1987; Klinger, 1997). Landa (1976) calls this type of instrument an Admissible Scoring System; he states, “An admissible scoring system…. enables and encourages the student to give honest answers to all questions, freely and frankly identifying the gaps in his knowledge” (p. 14).

One potential advantage of this system is that the learner expresses not only confidence in an answer but also the relative correctness of all the distracters.
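The article does not say how a position inside the triangle is turned into values for each option. One common way to describe a point relative to three corners is barycentric coordinates: three non-negative weights that sum to 1 inside the triangle. The sketch below assumes that interpretation, treating each weight as the relative strength of the response toward that corner's option; the function name `barycentric_weights` and the corner pixel positions are hypothetical, and this is not presented as the SPM's actual scoring rule.

```python
from typing import Dict, Tuple

Point = Tuple[float, float]


def barycentric_weights(p: Point, a: Point, b: Point, c: Point) -> Dict[str, float]:
    """Barycentric weights of point p with respect to triangle (a, b, c).

    Assumption (not from the article): each weight is read as the relative
    strength of the learner's response toward the option at that corner.
    """
    (px, py), (ax, ay), (bx, by), (cx, cy) = p, a, b, c
    # Twice the signed area of the triangle; zero means the corners are collinear.
    det = (by - cy) * (ax - cx) + (cx - bx) * (ay - cy)
    if det == 0:
        raise ValueError("triangle corners are collinear")
    w_a = ((by - cy) * (px - cx) + (cx - bx) * (py - cy)) / det
    w_b = ((cy - ay) * (px - cx) + (ax - cx) * (py - cy)) / det
    w_c = 1.0 - w_a - w_b  # the three weights always sum to 1
    return {"A": w_a, "B": w_b, "C": w_c}


# Hypothetical pixel positions for the corners of options A, B and C.
corners = {"A": (0.0, 0.0), "B": (300.0, 0.0), "C": (150.0, 300.0)}
print(barycentric_weights((150.0, 100.0), *corners.values()))  # centre point: roughly equal weights
print(barycentric_weights((150.0, 250.0), *corners.values()))  # near corner C: C weighted most
```

A centre point would correspond to a neutral response like the one described for Figure 1b, while a point near one corner, as in Figure 1a, gives that option most of the weight.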

Gender Effects and Response Certitude

Gender has often arisen as a significant factor in instructional settings, and with instructional technology in particular. Technology has been viewed as having the potential to encourage performance differences on the basis of gender (Mangione, 1995). Gender effects are often a controversial topic in the literature (Kirk, 1992). In particular, there is some evidence that performance differences on standardized multiple-choice tests can be attributed to gender (Wainer & Steinberg, 1992). On multiple-choice tests, women appear to change their responses more often than men (Skinner, 1983). Additionally, studies show that the probability of guessing on multiple-choice tests is correlated with gender (Ben-Shakhar & Sinai, 1991).

The SPM instrument presents a number of separate variables that may be influenced by gender. First, because the instrument is delivered through the Internet, it may be a concern that a few studies have shown that males in general have greater skill and experience with computers (Reinen & Plomp, 1993; Scragg & Smith, 1998; Rajagopal & Bojin, 2003). Secondly, the SPM instrument attempts to ascertain a measure of response certitude relative to the available choices. Response certitude is a measure of one’s confidence in one’s response; it is related to one’s knowledge base and experience but may also be an inherent learner characteristic (Kulhavy & Stock, 1989; Mory, 1991). Response certitude may be influenced by gender (Linn & Hyde, 1989). Of particular interest is the suggestion that females may be less likely to guess when responding to tests (Linn, De Benedictis, Delucchi, Harris & Stage, 1987).

Thirdly, the SPM instrument requires a somewhat spatial-mechanical response, and there is some evidence that in certain circumstances females perform such tasks with less success than their male counterparts (Casey, Nuttall, & Pezaris, 2001). While these gender effects are often reported, there is also some evidence that they are minimal if not absent (Linn & Hyde, 1989).

Method

Purpose

The purpose of this study was to determine whether gender influences response certitude as measured by the SPM instrument.

Participants

The participants for this study were undergraduate students in the College of Education at Ohio University. Students were offered the opportunity to participate and self-selected from four separate sections of an Introduction to Instructional Technology course. Sixty-two students participated in this study. Participants reported their knowledge of the study’s subject matter as very low.

Materials

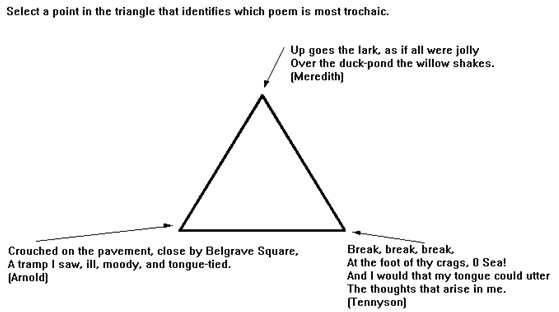

The SPM instrument used to collect data was created using Macromedia’s Authorware™ multimedia environment. The instrument presented content and tracked results. Participants were presented a brief tutorial on the trochaic poetry form (chosen for its unfamiliarity among participants). At the completion of the tutorial, participants were presented with 15 classification questions delivered through the SPM response triangle. Each question was displayed in a format similar to Figure 2. The instrument was delivered over the Internet. Data were collected and recorded to a database through a secure Internet connection.

Figure 2. Presentation of Questions (Moore, 2005).

Procedure

Participants were asked to log onto the experiment program. All participants received a demonstration of how to respond to the SPM instrument. The program then randomly assigned participants to either a non-instruction or an instructional intervention group. The instructional group received instruction and examples of trochaic poetry concepts. The examples provided in the instructional treatment were not repeated in the assessment portion of the experiment. The subject matter for this experiment was trochaic meter in poetry. Treatments and assessment items were similar to those used in a previous study by Merrill and Tennyson (1971). The treatment was presented on the screen for three minutes. This concept presentation was followed by the SPM instrument, which asked the participant to select the correct, previously unencountered example of the concept in question. There were fifteen questions in the SPM assessment. All participants received the same questions in the same order. The results were then transmitted to the researcher through a secure e-mail system and included a unique participant number identifying the participant’s gender, as well as their scores on the post-assessment. The hypothesis tested was that learners of different genders would respond with different levels of response certitude, with greater certitude indicated by a smaller pixel distance from the correct corner of the triangle.
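The article measures certitude as the pixel distance from the correct corner but does not give the exact computation. The sketch below assumes a straight-line (Euclidean) distance between the learner's click and the correct corner, averaged over one participant's items; the corner coordinates, the response data, and the helper names `pixel_distance` and `mean_pixel_distance` are all hypothetical.

```python
import math
from statistics import mean
from typing import Dict, List, Tuple

Point = Tuple[float, float]

# Hypothetical screen coordinates (pixels) for the three corners of the triangle.
CORNERS: Dict[str, Point] = {"A": (0.0, 0.0), "B": (300.0, 0.0), "C": (150.0, 300.0)}


def pixel_distance(click: Point, correct: str) -> float:
    """Euclidean distance in pixels from a click to the correct option's corner."""
    return math.dist(click, CORNERS[correct])


def mean_pixel_distance(responses: List[Tuple[Point, str]]) -> float:
    """Average distance over one participant's items (smaller = closer to the correct answer)."""
    return mean(pixel_distance(click, correct) for click, correct in responses)


# Three hypothetical items: (click position, correct option).
responses = [((150.0, 250.0), "C"), ((30.0, 40.0), "A"), ((300.0, 0.0), "B")]
print(round(mean_pixel_distance(responses), 2))  # 33.33
```

Under this assumption a click exactly on the correct corner scores 0, so the per-participant average reflects both correctness and how firmly the learner committed to it.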

Results

Data for each gender are summarized in Table 1.

Statistical tests used

The data were then submitted to a standard one-way ANOVA to determine whether the difference between males and females was large enough to warrant the assertion that one gender responded with more certitude than the other using the SPM instrument. The results of the ANOVA computation are reported in Table 2.

Table 1

Average pixel distance from correct corner

| Gender | Count (N) | Sum | Average pixel distance from correct corner (M) | S.D. |
|---|---|---|---|---|
| Female | 40 | 4300.29 | 107.51 | 15.94 |
| Male | 22 | 2498.46 | 113.57 | 16.37 |

Table 2

ANOVA results

| Source of Variation | SS | df | MS | F | P-value | F crit |
|---|---|---|---|---|---|---|
| Between Groups | 521.0979 | 1 | 521.0979 | 2.01 | 0.16 | 4.00 |
| Within Groups | 15541.89 | 60 | 259.0315 | | | |
| Total | 16062.99 | 61 | | | | |

Analysis of Data

Females recorded, on average, a smaller pixel distance than their male counterparts (M = 107.51 versus M = 113.57). However, this difference is not statistically significant. The ANOVA revealed an F value of 2.01, below the critical value of 4.00, and a corresponding p-value of .16. Because the p-value is above the selected alpha level of .05, the results suggest that neither gender is more likely than the other to express certitude using the SPM instrument.
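Table 2 can be approximately reproduced from the summary statistics in Table 1. The sketch below is a textbook one-way ANOVA computed from each group's N, mean and S.D., assuming the S.D. values are sample standard deviations; it is not the software used in the study, and the function name `one_way_anova` is hypothetical.

```python
from typing import List, Tuple


def one_way_anova(groups: List[Tuple[int, float, float]]) -> Tuple[float, float, int, int, float]:
    """One-way ANOVA from per-group (n, mean, sd) summaries.

    Returns (SS_between, SS_within, df_between, df_within, F).
    """
    n_total = sum(n for n, _, _ in groups)
    grand_mean = sum(n * m for n, m, _ in groups) / n_total
    # Between groups: spread of the group means around the grand mean, weighted by n.
    ss_between = sum(n * (m - grand_mean) ** 2 for n, m, _ in groups)
    # Within groups: pooled spread inside each group, recovered from the sample S.D.s.
    ss_within = sum((n - 1) * sd ** 2 for n, _, sd in groups)
    df_between = len(groups) - 1
    df_within = n_total - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return ss_between, ss_within, df_between, df_within, f_stat


# Summary statistics from Table 1: (N, mean pixel distance = Sum / N, S.D.)
female = (40, 4300.29 / 40, 15.94)
male = (22, 2498.46 / 22, 16.37)

ss_b, ss_w, df_b, df_w, f_stat = one_way_anova([female, male])
F_CRIT = 4.00  # critical F(1, 60) at alpha = .05, as listed in Table 2
print(f"SS_between={ss_b:.1f} SS_within={ss_w:.1f} df=({df_b}, {df_w}) F={f_stat:.2f}")
print("significant" if f_stat > F_CRIT else "not significant at alpha = .05")
```

With these inputs F comes out at about 2.01 on (1, 60) degrees of freedom, below the F crit of 4.00. The within-groups sum of squares is slightly smaller than in Table 2 because the S.D. values in Table 1 are rounded. Computing the p-value itself requires the F distribution (for example from a statistics library), which this standard-library sketch leaves out.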

Discussion

The use of the SPM in this experiment indicates that gender does not contribute to different levels of response certitude when using the SPM instrument. This finding, although limited in scope, provides evidence that the SPM may be used with both male and female learners and that its results are not unduly influenced by gender-based predispositions to express one’s response certitude.

The results run contrary to a variety of studies that identify gender as a significant variable in the use of assessment instruments, whether delivered traditionally or through technology. The results in this study may be attributable to a number of factors, including a potentially technology-savvy sample and a comfortable, non-threatening environment. These results may also be attributable to the unique characteristics of the subject matter (trochaic poetry). It is possible that a different subject matter or a different domain of knowledge would have resulted in more differentiation in reported scores.

The SPM is a relatively new assessment device and requires further study on a number of variables to determine its efficacy; however, the results of this study indicate that one substantial objection to its use, that of gender equality of responses, may not be a barrier to producing valid and reliable results.

References

Ben-Shakhar, G., & Sinai, Y. (1991). Gender differences in multiple-choice tests: The role of differential guessing tendencies. Journal of Educational Measurement, 28, 23-35.

Bruno, J.E. (1987). Admissible probability measures in instructional management. Journal of Computer-based Instruction, 14(1), 23-30.

Casey, M.B., Nuttall, R.L., & Pezaris, E. (2001). Spatial-Mechanical Reasoning Skills Versus Mathematics Self-Confidence as Mediators of Gender Differences on Mathematics Subtests Using Cross-National Gender-Based Items. Journal for Research in Mathematics Education, 32(1), 28-57.

Kirk, D. (1992). Gender issues in information technology as found in schools: Authentic, synthetic, fantastic? Educational Technology, 32(4), 28-35.

Klinger, A. (1997). Experimental validation of learning accomplishment. Presented at the ASEE/IEEE Frontiers in Education Conference, July 11, 1997. Retrieved September 3, 2005, from http://fie.engrng.pitt.edu/fie97/papers/1271.pdf

Kulhavy, R.W., & Stock, W.A. (1989). Feedback in written instruction: The place of response certitude. Educational Psychology Review, 1(4), 279-308.

Landa, S. (1976, March). CAAPM: Computer-aided admissible probability measurement on Plato IV (R-172-ARPA). Santa Monica, CA: Rand.

Linn, M., De Benedictis, T., DeLucchi, K., Harris, A., & Stage, E. (1987). Gender differences in National Assessment of Educational Progress science items: What does "I Don’t Know" really mean? Journal of Research in Science Teaching, 24(3), 267-278.

Linn, M. C., & Hyde, J. S. (1989). Gender, mathematics, and science. Educational Researcher, 18, 17-27.

Mangione, M. (1995). Understanding the critics of educational technology: Gender inequalities and computers. Proceedings of the 1995 Annual National convention of the Association for Educational Communications and Technology (AECT), Anaheim, CA. (ERIC Document Reproduction Service No. ED 383 311).

Moore, D.R. (2005). A software architecture for guiding instruction using student’s prior knowledge. In M. Orey, M.A. Fitzgerald, & R.M. Branch (Eds.), Educational media and technology yearbook 2005 (Vol. 29). Westport, CT: Libraries Unlimited.

Mory, E.H. (1991). The use of informational feedback in instruction: Implications for future research. Educational Technology Research and Development, 40(3), 5-20.

Nelson, T.O. (1988). Predictive accuracy of the feeling of knowing across different criterion tasks and across different subject populations and individuals. In M.M. Gruneberg, P.E. Morris, & R.N. Sykes (Eds.), Practical aspects of memory (Vol. 1, pp. 190-196). New York: Wiley.

Norman, D. (1988). The design of everyday things. New York: Basic Books.

Rajagopal, I., & Bojin, N. (2003). A Gendered World: Students and Instructional Technologies. First Monday, 8(1). Retrieved from http://firstmonday.org/issues/issue8_1/rajagopal/index.html

Reinen, I.J., & Plomp, T. (1993). Some gender issues in educational computer use: Results of an international comparative study. Computers and Education, 20, 353-365.

Scragg, G., & Smith, J. (1998). A study of barriers to women in undergraduate computer science. SIGCSE Bulletin, 30(1), 82-86.

Skinner, N. F. (1983). Switching answers on multiple-choice questions: Shrewdness or shibboleth? Teaching of Psychology, 10, 220-222.

Wainer, H., & Steinberg, L. S. (1992). Sex differences in performance on the mathematics section of the scholastic aptitude test: A bidirectional validity study. Harvard Educational Review, 62(3), 323-336.

About the Author

David Richard Moore received his Ph.D. in Instructional Systems Design from Virginia Polytechnic Institute and State University (Virginia Tech) in 1995. His research focuses on instructional designs for concept attainment, interactive multimedia and computer modeling. Most of his research is conducted through specially designed computer-based interactive systems.

David has built simulations and interactive computer-based training for the Federal Aviation Administration; has worked in faculty development for the University of Nevada, Reno; and most recently was the director of Distributed Learning at Portland State University. David is currently an assistant professor of Instructional Technology at Ohio University.

250 McCracken Hall

Ohio University

Athens OH 45701

Phone: 740-597-1322

Email: moored3@ohio.edu